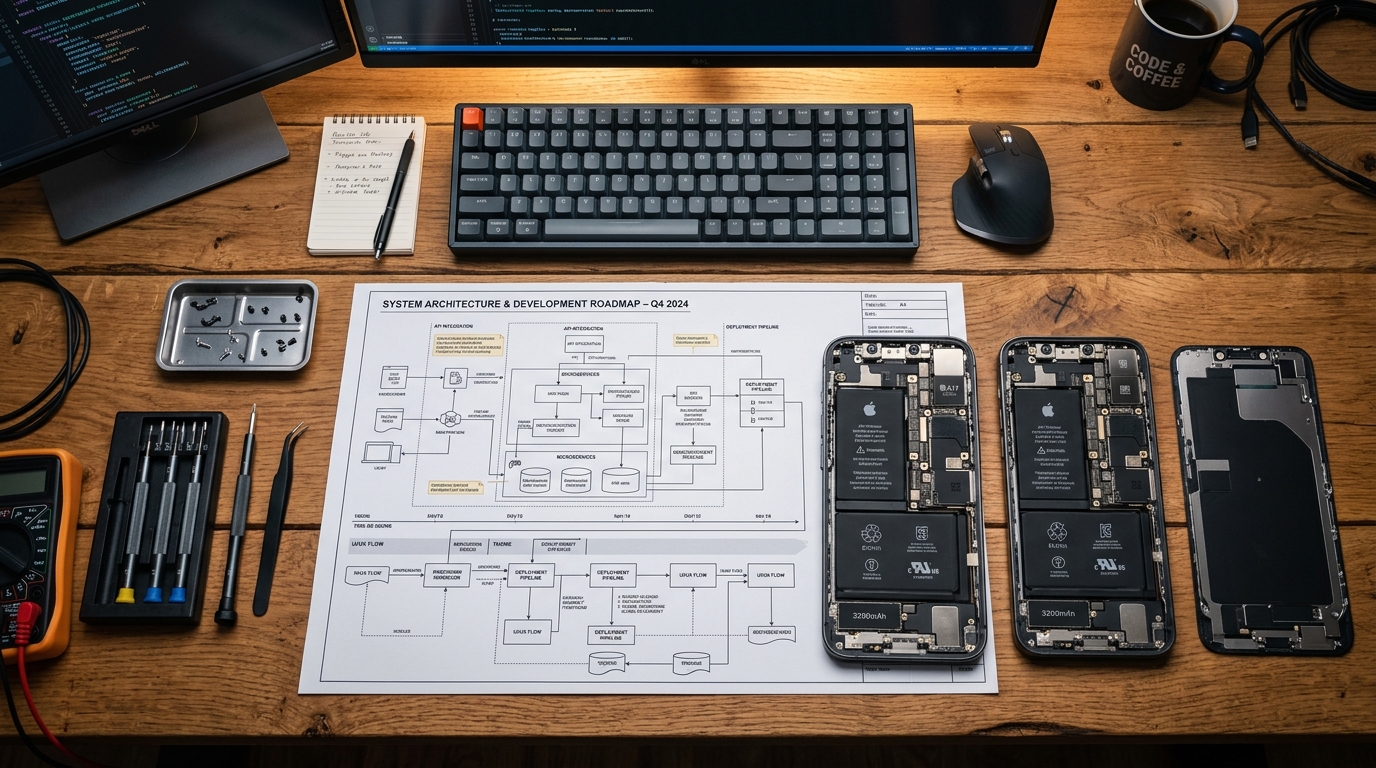

Last quarter, I was running performance benchmarks on a lightweight video synthesis model we had just fine-tuned. Instead of testing it on our flagship laboratory rigs, I loaded the beta onto an aging iPhone 11. Predictably, it choked: rendering a three-second clip took nearly four minutes, and the device grew uncomfortably warm. But watching that hardware hit its thermal limits taught me more about software roadmapping than any market analysis could. At AI App Studio, where we develop technology-focused software, our vision isn't based on what artificial intelligence can accomplish in a high-powered server farm. It is based entirely on what it can execute in someone's hands.

Why are we building for the edge rather than the cloud?

Edge computing in mobile applications is the practice of running computational models directly on local device hardware rather than relying on external cloud servers for processing. I hold a firm stance on this: the future of mobile intelligence must live on the edge.

Many developers argue that offloading heavy processing to the cloud is the only way to deliver complex features without draining a device's battery or ballooning the application size. While this is partially true for massive foundation models, the dependency adds network latency and exposes user data to privacy risks. When a user opens an application expecting immediate utility, a three-second network delay to fetch an API response breaks the experience.

Our roadmap deliberately avoids thin cloud wrappers. We prioritize building applications with embedded, purpose-built models that function offline. The true benchmark of our software isn't how smart it is on a gigabit fiber connection, but how reliably it performs on a subway commute with zero signal.

How do dropping production costs reshape mobile software?

To understand our long-term product direction, you have to look at the macro trends in media and utility creation. According to LTX Studio's 2026 Creative Trends Report, enterprise AI video adoption grew 127% over the past year. At the same time, production costs dropped by 91%, and timelines collapsed from days to minutes.

This collapse in cost and time isn't just a corporate metric; it directly influences consumer expectations. If enterprise teams can generate and test synthetic assets in minutes, everyday users expect their mobile tools to offer the same speed. Furthermore, data from Accio's 2026 market analysis projects the broader audio and video equipment market to hit USD 21.46 billion. The line between professional studio hardware and consumer mobile devices is disappearing.

Our response to this data is straightforward. We are not just building tools for consumption; we are building mobile production environments. If a user wants to edit a complex timeline or process high-fidelity audio, they should not be forced back to a desktop environment. The compute capacity is already in their pockets; the software simply needs to catch up.

What happens when you build artificial intelligence for aging hardware?

It is easy to develop an impressive product when your baseline test device is an iPhone 14 Pro equipped with an A16 Bionic chip and ample Neural Engine cores. The real engineering challenge, and our primary design constraint, is creating models that scale gracefully across older architectures.

One principle we repeat internally: the best software doesn't demand faster hardware; it degrades gracefully to match the hardware it has. If we deploy an advanced background segmentation feature, it should run smoothly on an iPhone 14 Plus or a standard iPhone 14. If that same feature is accessed on an iPhone 11, the application should automatically switch to a lighter-weight model variant. The output might take slightly longer to produce, or rely on a less aggressive sampling method, but the application will not crash.
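To make the fallback idea concrete, here is a minimal sketch of variant selection in Python. It is an illustration under stated assumptions, not our production code: the variant names, the memory and compute thresholds, and the `select_variant` helper are all hypothetical, and a real application would read device capabilities from the platform rather than take them as arguments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVariant:
    name: str
    min_memory_mb: int      # hypothetical peak working-set requirement
    min_neural_tops: float  # hypothetical compute floor for acceptable speed


# Ordered from heaviest to lightest. All figures are illustrative, not measured.
VARIANTS = [
    ModelVariant("segmentation-full", 1200, 15.0),
    ModelVariant("segmentation-lite", 600, 5.0),
    ModelVariant("segmentation-tiny", 250, 0.0),
]


def select_variant(available_memory_mb, neural_tops, variants=VARIANTS):
    """Return the heaviest variant the device can run.

    If nothing matches, fall back to the lightest variant instead of
    raising, so the feature slows down rather than failing outright.
    """
    for variant in variants:
        if (available_memory_mb >= variant.min_memory_mb
                and neural_tops >= variant.min_neural_tops):
            return variant
    return variants[-1]


if __name__ == "__main__":
    # Hypothetical device profiles, not real hardware specifications.
    print(select_variant(2000, 16.0).name)  # newer device
    print(select_variant(700, 6.0).name)    # older device
```

Keeping the variants in a single ordered list means that adding a new tier is a data change rather than a new code path, which is one way to keep the fallback logic easy to test.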

This hardware-inclusive approach dictates our entire development cycle. We spend weeks pruning and quantizing models so that they fit within strict memory constraints. By refusing to abandon users with older hardware, we force our engineering teams to write highly optimized code rather than relying on brute-force processing power.
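For readers unfamiliar with the two techniques, the sketch below shows the basic arithmetic in plain Python: magnitude pruning zeroes the smallest weights, and symmetric int8 quantization stores each weight in 8 bits instead of 32. This is a toy illustration, not our pipeline. The function names, the 50-million-parameter model, and the 100 MB budget are assumptions chosen for the example, and real toolchains work per layer with calibration data.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (0.0 to 1.0)."""
    k = int(len(weights) * sparsity)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    drop = set(smallest)
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization. Returns (int values, scale)."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 values back to approximate float weights."""
    return [v * scale for v in quantized]


def model_size_mb(num_params, bits_per_param):
    """Raw weight storage in megabytes (10**6 bytes), ignoring metadata."""
    return num_params * bits_per_param / 8 / 1_000_000


def fits_budget(num_params, bits_per_param, budget_mb):
    return model_size_mb(num_params, bits_per_param) <= budget_mb


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    print(prune_by_magnitude(weights, 0.5))
    quantized, scale = quantize_int8(weights)
    print(quantized, dequantize(quantized, scale))
    # Hypothetical 50M-parameter model against a hypothetical 100 MB budget.
    print(model_size_mb(50_000_000, 32), fits_budget(50_000_000, 32, 100))
    print(model_size_mb(50_000_000, 8), fits_budget(50_000_000, 8, 100))
```

Under these assumptions, the float32 weights need 200 MB and miss the budget, while the int8 version needs 50 MB and fits. The price is a small reconstruction error per weight, bounded by half the quantization scale.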

How do utility applications evolve in a hybrid market?

Not every application requires generating video or rendering 3D environments. Much of our roadmap focuses on removing friction from mundane, everyday tasks. A technology roadmap that ignores basic utility is inherently flawed.

Take document management, for example. When we integrate local language models into a PDF editor, the goal isn't to create a flashy chatbot. The goal is to allow a user to extract specific clauses from a fifty-page contract instantaneously, without uploading sensitive legal documents to a third-party server.

The same logic applies to a mobile CRM. Sales professionals do not need an artificial intelligence assistant that tries to write their emails from scratch. They need intelligent systems that automatically categorize incoming client interactions, log offline meeting notes, and surface relevant historical data precisely when a call comes in. In my experience, users reject intelligence that tries to replace their judgment. They readily adopt intelligence that removes repetitive administrative friction.

Where does our technology-focused roadmap lead next?

A roadmap is a decision matrix, not a wishlist. As my colleague Doruk Avcı detailed in a recent post on how a technology-focused app studio builds a product roadmap, every technical integration we pursue must map directly to a documented user need.

Over the next thirty-six months, our engineering focus will index heavily on multi-modal local processing. We are moving beyond single-purpose text or image models. We are researching frameworks that allow local mobile applications to process audio, text, and visual inputs simultaneously, with each input drawing context from the others without leaving the device.

By keeping the processing on the edge, aggressively optimizing for varying hardware constraints, and targeting actual user friction rather than industry hype, we ensure our applications remain practical. The cloud will always have its place for mass storage and asynchronous tasks, but the immediate, responsive future of software is happening right on the device.