Early in my career as a DevOps engineer, I spent months optimizing a cloud-native microservice architecture for a media production company. We threw massive server resources at a single problem: reducing audio-processing latency. The AWS bills were staggering, and the infrastructure was highly fragile. Fast-forward to today: as we formalize the 2026 product roadmap for AI App Studio, that entire centralized cloud model feels like ancient history. We are no longer pushing data up to a server; we are pushing the compute power directly down to the user's pocket.

At its core, a hardware-first product roadmap is a development strategy that prioritizes running complex models directly on local consumer devices rather than relying on remote servers. This approach forces us to rethink everything from microservice deployment to feature prioritization. As a technology-focused software studio that develops mobile applications with artificial intelligence integration, we let the rapid decentralization of digital workflows shape our entire roadmap.

For engineering teams and product managers trying to manage the transition away from heavy cloud reliance, building a sustainable application ecosystem requires a structured approach. Here is the step-by-step framework we use to map our long-term technical vision to real-world user friction.

Step 1: Track the Decentralization of Physical Workspaces

Before writing any code, you must understand where the target user is actually working. The traditional definition of a dedicated workspace is collapsing. According to 2026 industry tracking from Accio, the broader audio and video equipment market is projected to reach $21.46 billion, driven heavily by hybrid work and AI shifts. Simultaneously, Circular Studios recently reported that the physical photography studio industry is rapidly migrating toward unstaffed, self-service models to lower operating costs and ensure 24/7 availability.

This data reveals a critical insight: users want professional-grade environments, but they no longer want the overhead of managing them. The physical location matters far less than the software infrastructure supporting it. The studio of 2026 is not a physical room with acoustic foam; it is a decentralized software ecosystem running on mobile edge hardware.

When physical spaces become unstaffed, software has to step in and act as the administrative and creative staff. We monitor these physical industry trends closely because they tell us exactly where digital friction is about to spike.

Step 2: Establish Your Local Hardware Baselines

You cannot build a reliable edge-computing roadmap without setting strict hardware constraints. In cloud architecture, if a process is too heavy, you simply spin up another container. In mobile development, you have to work within the thermal and battery limits of the physical device in the user's hand.

We segment our optimization targets across distinct generational hardware to ensure stability:

- The Legacy Baseline: The iPhone 11 (A13 Bionic) remains our minimum viable baseline for many foundational local tasks. Its Neural Engine is older, but it still handles basic background natural language processing without requiring cloud intervention.

- The Core Standard: We heavily optimize for the A15 Bionic chip in the standard iPhone 14 and the iPhone 14 Plus. These devices represent the large middle market of professional users and provide enough thermal headroom to run complex document parsing and local audio filtering reliably.

- The Advanced Edge: For high-end, compute-heavy rendering, we target the iPhone 14 Pro and its A16 Bionic. Its higher memory bandwidth and newer processor architecture allow us to run multimodal models entirely offline, replacing tasks that previously required a desktop workstation.

By mapping software features directly to these specific silicon capabilities, we avoid the trap of building applications that drain battery life or crash under load.
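One way to make this tiering concrete is a small capability table that gates each feature by a minimum tier. The sketch below is a minimal illustration under stated assumptions, not our production code. The tier names, device strings and feature identifiers are all hypothetical, and a real app would more likely probe device capabilities at runtime than match on model names.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical capability tiers mirroring the three hardware baselines."""
    LEGACY = 1    # e.g. iPhone 11
    CORE = 2      # e.g. iPhone 14 / 14 Plus (A15 Bionic)
    ADVANCED = 3  # e.g. iPhone 14 Pro


# Hypothetical mapping from a device name to its tier.
DEVICE_TIERS = {
    "iPhone 11": Tier.LEGACY,
    "iPhone 14": Tier.CORE,
    "iPhone 14 Plus": Tier.CORE,
    "iPhone 14 Pro": Tier.ADVANCED,
}

# Hypothetical features and the minimum tier each one is allowed to run on.
FEATURE_MIN_TIER = {
    "background_nlp": Tier.LEGACY,
    "document_parsing": Tier.CORE,
    "audio_filtering": Tier.CORE,
    "multimodal_offline": Tier.ADVANCED,
}


def enabled_features(device: str) -> set[str]:
    """Return the features a device may run locally.

    Unknown devices get an empty set, so nothing heavy is enabled on
    hardware that has not been profiled.
    """
    tier = DEVICE_TIERS.get(device)
    if tier is None:
        return set()
    # IntEnum members compare as integers, so a higher tier unlocks every
    # feature whose minimum tier is at or below it.
    return {name for name, minimum in FEATURE_MIN_TIER.items() if tier >= minimum}
```

Because tiers are ordered, adding a new device generation only means adding one row to the device table. The feature rules stay untouched.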

Step 3: Map Technical Capabilities to Daily Workflow Friction

A common trap for engineering teams is building a feature simply because the underlying model supports it. A strong roadmap connects technical feasibility directly to a frustrating user bottleneck. As I outlined in my previous post detailing how we build roadmaps around real user needs, every application must justify its existence by removing a specific barrier.

We evaluate new applications using a strict decision framework:

- Latency reduction: Does moving this task from the cloud to the device save the user noticeable wait time?

- Data privacy: Does the workflow involve sensitive client data that is safer kept strictly on local storage?

- Offline reliability: Can the user complete the task where the network is congested (like a crowded conference venue) or weak (like a remote shoot)?

If an idea does not satisfy at least two of these criteria, it does not belong on our production schedule. We build tools to solve friction, not to showcase algorithms.
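The two-of-three rule is simple enough to encode directly, which keeps triage honest when a proposal is exciting but thin on user value. This is a minimal sketch that assumes each criterion is reduced to a yes/no judgment made during review. The `Proposal` type and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """A candidate feature scored against the three Step 3 criteria."""
    name: str
    reduces_latency: bool   # noticeably faster on-device than via the cloud
    protects_privacy: bool  # keeps sensitive client data in local storage
    works_offline: bool     # usable with congested or weak connectivity

    def criteria_met(self) -> int:
        # bool is a subclass of int in Python, so summing counts the True values.
        return sum((self.reduces_latency, self.protects_privacy, self.works_offline))

    def belongs_on_schedule(self, threshold: int = 2) -> bool:
        """Apply the 'at least two of three' rule."""
        return self.criteria_met() >= threshold


if __name__ == "__main__":
    # Hypothetical proposals for illustration only.
    for proposal in (
        Proposal("local_invoice_parsing", False, True, True),
        Proposal("style_transfer_demo", True, False, False),
    ):
        print(proposal.name, proposal.belongs_on_schedule())
```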

Step 4: Overhaul Administrative Bottlenecks Alongside Creative Tasks

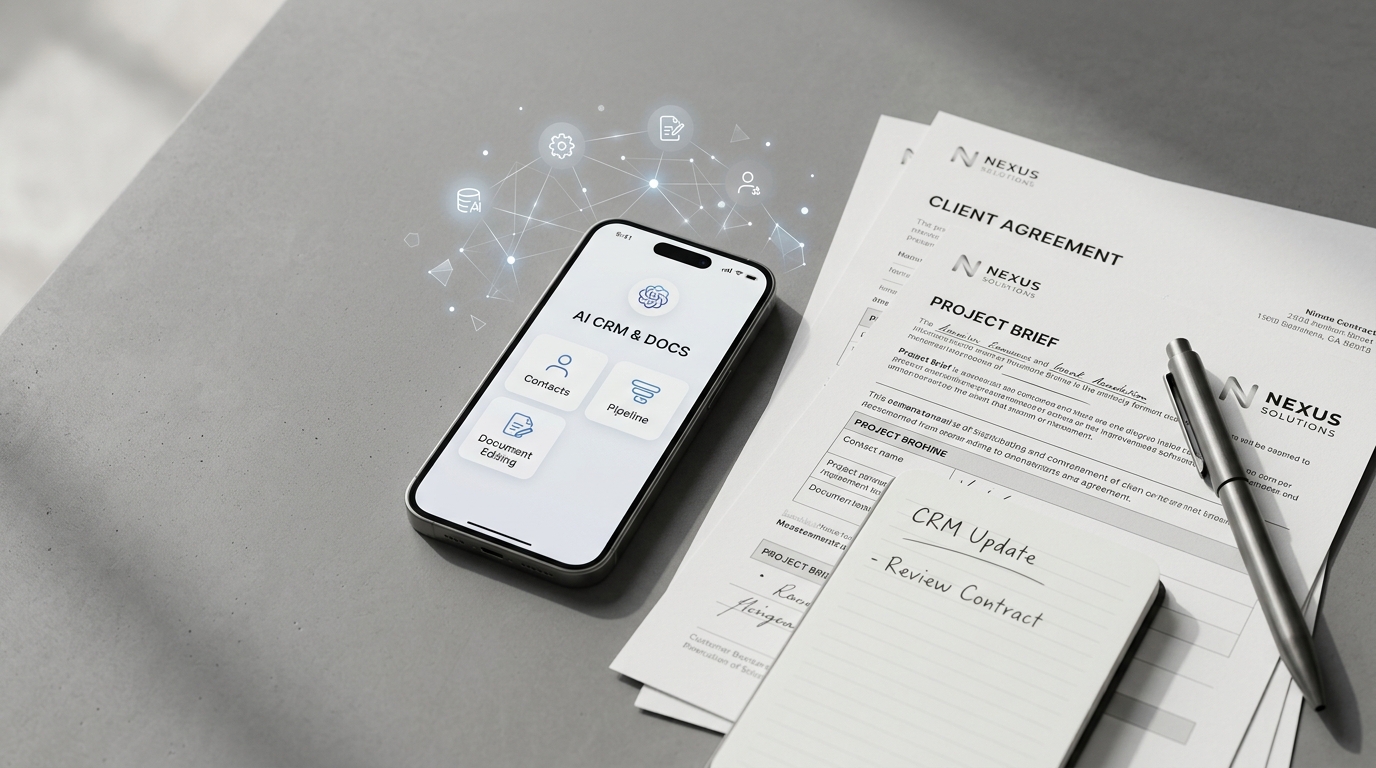

While the media often focuses on generative imagery or video, the heaviest friction for independent professionals is usually administrative. Managing a decentralized business requires handling client communications, contracts, and scheduling without being tethered to a desktop.

For example, mobile professionals often struggle with document management. A standard PDF editor on a phone is typically clunky and requires manual text highlighting or formatting. By integrating local intelligence, we can develop a mobile tool that automatically structures invoice data or extracts key contract clauses locally, keeping sensitive financial details off external servers.
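The sketch below turns raw invoice text into structured fields without any network call. To stay self-contained, it swaps the on-device model for plain regular expressions. The field names, the patterns and the assumed invoice layout (ISO dates, dollar amounts with two decimals) are all hypothetical.

```python
import re
from decimal import Decimal

# Hypothetical patterns for a simple English-language invoice. A production
# tool would likely use a local model rather than hand-written rules.
# \b keeps "Total" from matching inside words such as "Subtotal".
PATTERNS = {
    "invoice_number": re.compile(r"\bInvoice\s*(?:No\.?|#)\s*[:\-]?\s*([A-Z0-9\-]+)", re.I),
    "date": re.compile(r"\bDate\s*[:\-]?\s*(\d{4}-\d{2}-\d{2})", re.I),
    "total": re.compile(r"\bTotal\s*(?:Due)?\s*[:\-]?\s*\$?\s*([\d,]+\.\d{2})", re.I),
}


def extract_invoice_fields(text: str) -> dict[str, object]:
    """Extract structured fields from invoice text, entirely in-process."""
    fields: dict[str, object] = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(text)
        if match:
            fields[name] = match.group(1)
    # Use Decimal for money so amounts are not subject to float rounding.
    if "total" in fields:
        fields["total"] = Decimal(str(fields["total"]).replace(",", ""))
    return fields


if __name__ == "__main__":
    sample = "Invoice #: INV-2041\nDate: 2026-03-14\nSubtotal: $1,000.00\nTotal Due: $1,250.00"
    print(extract_invoice_fields(sample))
```

Callers only see a dictionary of fields. The regex table could later be replaced by an on-device model without changing the calling code.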

Similarly, traditional desktop customer relationship management (CRM) tools are too bloated for someone working from a mobile device. A lightweight, on-device CRM can categorize incoming client requests and organize project files based on local context. This is what we mean when we say hardware has outpaced software: the devices are capable of running complete back-office operations, provided the software architecture is built to support it.
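A lightweight version of that request triage can run with no server at all. The sketch below is hypothetical: it uses keyword counting as a stand-in for a small local text classifier. The category names and keyword lists are illustrative assumptions, not a real CRM schema.

```python
import re
from collections import Counter

# Hypothetical categories and trigger words for incoming client messages.
CATEGORY_KEYWORDS = {
    "scheduling": {"book", "booking", "reschedule", "availability", "calendar"},
    "billing": {"invoice", "payment", "refund", "quote", "price"},
    "deliverables": {"files", "edit", "revision", "export", "download"},
}


def categorize_request(message: str) -> str:
    """Assign a message to the category with the most keyword hits.

    Returns "general" when no keyword matches. On a tie, the category that
    was hit first in the message wins, because Counter.most_common keeps
    insertion order among equal counts.
    """
    words = re.findall(r"[a-z]+", message.lower())
    scores: Counter[str] = Counter()
    for word in words:
        for category, keywords in CATEGORY_KEYWORDS.items():
            if word in keywords:
                scores[category] += 1
    if not scores:
        return "general"
    return scores.most_common(1)[0][0]
```

Everything runs on the message text already stored on the phone, so client correspondence never has to leave the device to be sorted.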

Step 5: Adopt a Resilient, Device-Agnostic Architecture

From a system design perspective, moving away from centralized cloud computing requires a fundamental shift in how you write software. You must treat the mobile application not as a thin client viewing a web page, but as an independent microservice node.

When deploying updates or tweaking model weights, we rely on a modular architecture. Instead of forcing users to download large monolithic app updates, we separate the user interface layer from the inference engine. This lets us push lightweight, targeted improvements to the specific models handling tasks like audio isolation or text categorization.
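Separating the UI layer from the inference engine means model updates can be planned per model rather than per app release. This is a minimal sketch of that planning step. It assumes a hypothetical manifest in which each model carries its own version number, checksum and size. `ModelEntry`, `plan_model_updates` and the model names are illustrative, not an existing API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    """One independently versioned model in a hypothetical update manifest."""
    name: str
    version: int
    sha256: str      # checksum a client would verify after download (not shown)
    size_bytes: int


def plan_model_updates(installed: dict[str, ModelEntry],
                       available: dict[str, ModelEntry]) -> list[ModelEntry]:
    """Return only models that are new or have a newer published version.

    The app binary (UI layer) is not part of this plan; each returned entry
    could be downloaded and swapped into the inference engine on its own.
    """
    updates = []
    for name, remote in available.items():
        local = installed.get(name)
        if local is None or remote.version > local.version:
            updates.append(remote)
    return sorted(updates, key=lambda entry: entry.name)


def total_download_bytes(updates: list[ModelEntry]) -> int:
    """Size of the targeted update, as opposed to a full app re-download."""
    return sum(entry.size_bytes for entry in updates)
```

Comparing versions per model keeps each release small. An improved audio-isolation model ships alone, while unchanged models are never re-downloaded.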

This DevOps-inspired approach to mobile development ensures that our applications remain agile. As my colleague Bilge Kurt detailed in her analysis of how everyday mobile hardware is replacing heavy production workflows, efficiency is the defining metric for the next generation of software studios. The goal is to maximize performance while minimizing the application's footprint.

Step 6: Plan for the Long-Term Economics of Edge Computing

The final step in our roadmap planning is analyzing the long-term economics of software deployment. Cloud inference costs scale roughly linearly with user growth: the more successful your app becomes, the higher your server bills climb. By building a roadmap centered on local device processing, we break that linear cost curve.
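A simple linear cost model shows why moving inference onto the device changes the curve. The figures below are hypothetical placeholders, not our actual costs. The model also ignores real-world effects such as volume discounts and caching. It only illustrates how a much lower per-user cost eventually outweighs a higher fixed cost.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    """Monthly cost = fixed + per_user * users (a deliberately simple linear model)."""
    fixed: float     # e.g. base infrastructure, model distribution
    per_user: float  # marginal monthly cost of one more active user

    def monthly(self, users: int) -> float:
        return self.fixed + self.per_user * users


def break_even_users(cloud: CostModel, edge: CostModel) -> float:
    """User count at which the edge model's monthly cost equals the cloud model's.

    Assumes the cloud model has the higher per-user cost; above this point
    the edge model is cheaper.
    """
    if cloud.per_user <= edge.per_user:
        raise ValueError("expected cloud.per_user > edge.per_user")
    return (edge.fixed - cloud.fixed) / (cloud.per_user - edge.per_user)


if __name__ == "__main__":
    # Hypothetical placeholder figures in USD per month.
    cloud = CostModel(fixed=2_000.0, per_user=0.40)  # server-side inference
    edge = CostModel(fixed=3_000.0, per_user=0.02)   # on-device inference
    print(f"break-even: {break_even_users(cloud, edge):,.0f} users")
    for users in (1_000, 10_000, 100_000):
        print(f"{users:>7,} users  cloud ${cloud.monthly(users):,.2f}  edge ${edge.monthly(users):,.2f}")
```

With these placeholder numbers, the break-even point is 1000 / 0.38, or roughly 2,632 users. Beyond that, the gap widens with every new user instead of shrinking.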

This economic reality is what allows a studio to remain agile and independent. Because we are not subsidizing massive server farms, we can allocate more engineering resources toward refining the user experience and optimizing our codebase. It creates a sustainable cycle where the software gets faster, client data stays private, and the user gains total control over their daily digital environment.

Developing a roadmap for 2026 and beyond requires looking past the immediate hype cycle. It means recognizing that the most valuable software of the next decade will be the tools that run quietly, efficiently, and entirely in the palm of your hand.